Yesterday I established the basis for knowledge mapping, namely to ask a meaningful question in a meaningful context. I promised that I would place that into a wider programme which, in the language of our new Hexi approach, is called an assembly. Astute readers may also have spotted a developing theme in both the banner pictures and the opening picture. The former takes books and libraries as a theme and gradually moves through more structure to a card index, a form of metadata that may be unfamiliar to younger readers but which had high utility in its day. I’ve argued that one of the key aspects of knowledge management is creating channels through which knowledge can flow, regardless of whether you know what it is or not. So a knowledge map should tell you not only what the various features are but also the nature of the landscape.


The various pictures of pebbles relate to the issue of granularity, the key to complexity and to effective knowledge management. Getting the level of granularity right is key and this process focuses on that. The pebble pictures have also progressed in structure, with some loss of the human element from the start and now a separation between soil and pebbles. Lots of potential meaning there which I will not spell out.

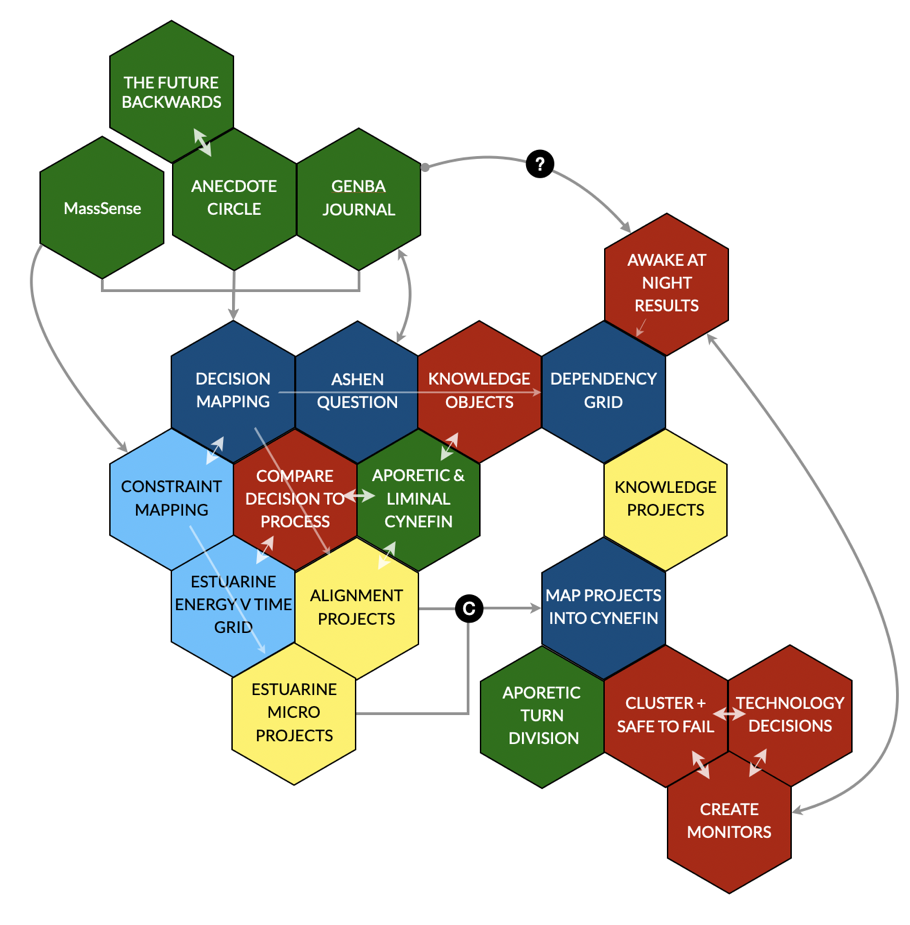

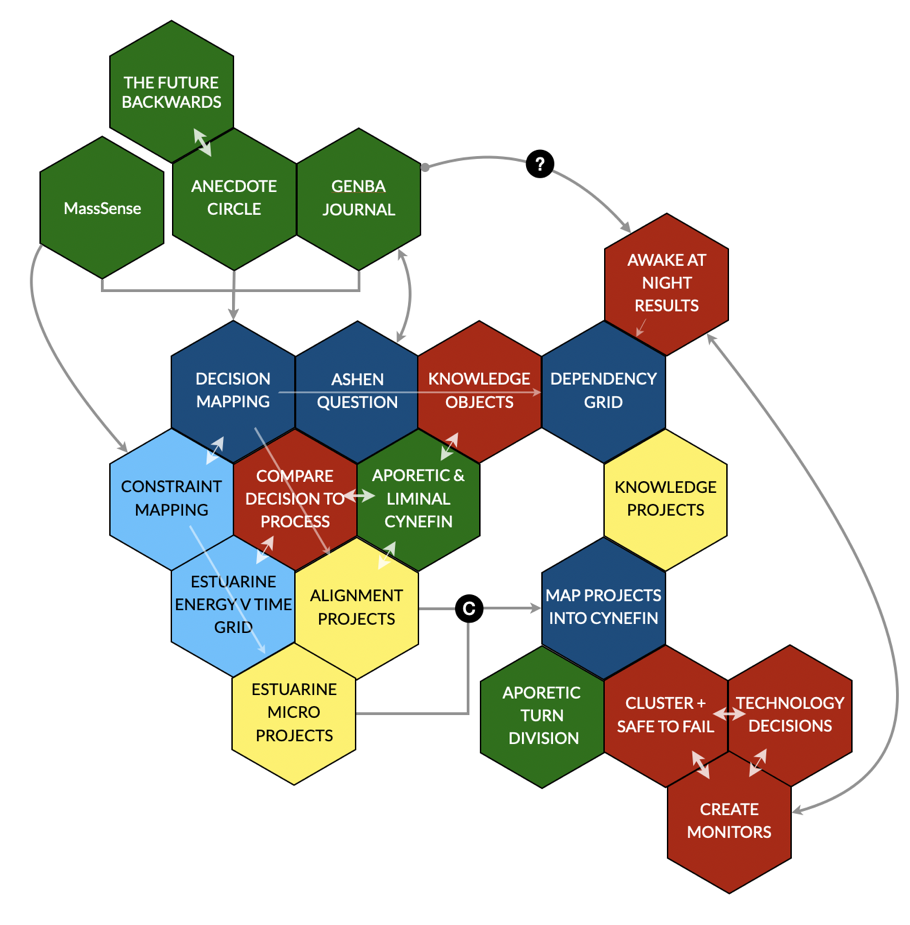

My plan is to build this in three phases: discovery, sense-making and action. Once that is complete I’ll look at how we might extend the process to do some more work in parallel as a demonstration of the flexibility of a methodology that is object orientated in nature. I’ve shown the full picture at the bottom but, as I would build it over time with the client, I will take the same approach here. You might want to check out the colour coding first.

I could do this with the physical Hexi kit and I did that on the kitchen table when I was getting the material ready. But for the purposes of this blog, I have stylised it somewhat. I’ll also use extracts from the big picture for each paragraph of explanation to allow the whole thing to build.

The overall goal here is to create a portfolio of pragmatic projects bottom up rather than starting with a grandiose and idealised, if well-intentioned, plan to become a knowledge-orientated company. If you do that you will always disappoint. We see the same thing with the Agile community, which is going through a very similar life cycle to that of KM. This approach, with very little adaptation, could be used to start the journey to agility, building on where we are, with a sense of direction but open to novelty on the pathway. And while I am at it, the same applies to Organisational Development and Change or anything with the misfortune to have the Transformation word attached to it. If I were working with OD then I would keep decision mapping but make some alterations to ASHEN; if with Agile then I would use distributed ethnography around user needs along with entangled trios instead of decision mapping and ASHEN, but otherwise all the options would stand. The whole point of Hexi is reuse and trans-initiative integration rather than multiple siloed initiatives within a utopian paradigm.

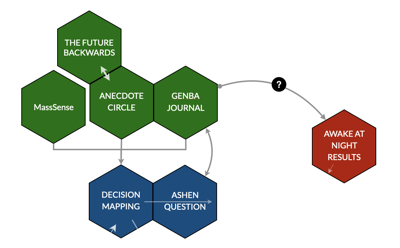

Discovery Phase

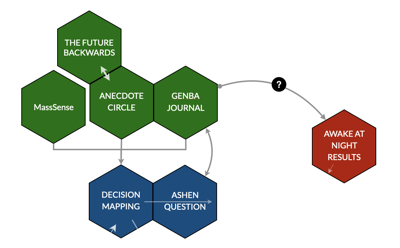

Here we have two parallel activities. One seeks to gather decisions (to set the meaningful context) and also to ask the ASHEN question. The second seeks to discover matters of concern to senior and primarily middle management in a larger organisation, but it isn’t necessarily confined to that; it could be the whole of the organisation if needed.

Three methods are available for this overall process and I’ll describe each briefly but you can go to the open-source wiki for more material if needed:

- Anecdote circles, which can be physical or virtual and which can use alternative timelines or side-casting methods such as the Future, Backwards. The goal is to gather anecdotal material about actual projects and activities that happened in the past, as well as fictional stories of what might have happened or might happen in the future. Fiction is as good as, and often better than, seeking facts as a disclosure technique.

- MassSense which is a SenseMaker® application that can present material to stimulate multiple respondents or simply ask them for ideas. In this context, we have shown a map of key processes and used that map as a signifier in SenseMaker® to support material entered and interpreted by each respondent.

- Genba, the big new thing in SenseMaker®, allows journal-based capture of decisions, observations, ideas and so on. It can be used as a one-off technique to gather decisions or as part of a continuous process. It is both a journal and a tool for journalists, so people can interview other people about decisions. That technique works well with senior management and high-status professionals, who will respond to an apprentice but less so to a consultant and who don’t think they have time to maintain a journal.

All three can of course be used in combination or in different sequences. Once this is complete we move into decision extraction and identification of various aspects for each decision cluster:

- Information received (and that we would have liked to receive)

- How the decision is communicated (and how that communication might have been improved)

The decision clusters are then given a name and placed in a brainstorming area (virtual or physical; if virtual we use concept mapping software) and they are sequenced and connected. Information out of one decision will be information into another and so on. If a decision doesn’t connect to another decision or to a start or end point then we have missed something, so we can go and hunt it down. All of that becomes a very messy map; it is, to use my phrase, messily coherent.
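
The connection check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the decision names, the `START` and `END` markers and the `find_loose_ends` helper are all hypothetical, and treating every unmatched input or output as a loose end is one possible reading of "doesn’t connect".

```python
# Hypothetical decision clusters: each lists the information it receives
# and the information it communicates onward. All names are illustrative.
decisions = {
    "approve budget": {"inputs": {"START"}, "outputs": {"budget figure"}},
    "select supplier": {"inputs": {"budget figure"}, "outputs": {"contract"}},
    "sign off delivery": {"inputs": {"contract"}, "outputs": {"END"}},
    "change spec": {"inputs": {"customer complaint"}, "outputs": {"revised spec"}},
}


def find_loose_ends(decisions):
    """Return decisions with an input nobody produces or an output nobody uses."""
    produced = {"START"}  # the start point counts as a valid source
    consumed = {"END"}    # the end point counts as a valid destination
    for d in decisions.values():
        produced |= d["outputs"]
        consumed |= d["inputs"]

    loose = {}
    for name, d in decisions.items():
        unsourced = d["inputs"] - produced
        unused = d["outputs"] - consumed
        if unsourced or unused:
            loose[name] = {"unsourced": sorted(unsourced), "unused": sorted(unused)}
    return loose


loose_ends = find_loose_ends(decisions)
for name, gaps in loose_ends.items():
    print(name, gaps)
```

Anything the check reports is a prompt to go back to the anecdotes or journals and look for the missing decision, not a verdict that the map is wrong.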

As we identify the decision clusters we also ask the ASHEN typology question and gather the results of that on another work area, randomising them in the initial placement.

In parallel with all of this, we go to the managers or those with funding, or everyone (that depends a little on context) and find out what are the current strategic and operational issues that are keeping them awake at night. I like to gather those from people individually and then cluster and compare rather than use a workshop. Obviously, if said management will use the Genba system or respond to the MassSense the results are improved and the overall process is more efficient but you may need to go and interview them.

One of the nice things about both software methods for discovery is that they not only scale, but also allow us to capture a lot of the elements for decision mapping.

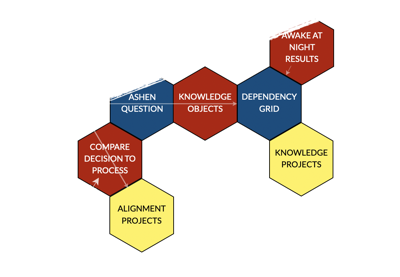

Sense-making Phase

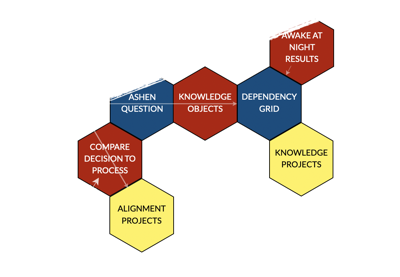

So we enter this phase with a decision map, a mass of ASHEN elements and a list of things that matter to whoever is the effective sponsor – might be middle management, leadership or the wider workforce or even external stakeholders (not wild about that term by the way but that is for another day). From this we end up with two parallel work-streams:

Stream one: reality check on the process (vertical on Hexi map)

- We take the decision map, which rather resembles a room where spiders have been spinning and abandoning webs for years, and compare it with the process map for the organisation, something that looks rather like an engineering diagram. If you don’t have that then sit down with Executives and get them to describe how they think the process works, draw it up and get their endorsement. It was a lot easier twenty years ago as that was at the height of the BPR fad and all organisations had a process chart. You can use systems dynamics mapping here as well as working with executives as it produces similar results. I normally do this using very large sheets of paper on two walls in a room: how we think it happens on one side, how it actually happens on the other.

- If the differences are so great that meaningful comparison is impossible then I would abandon the ‘how we think it is’ more or less completely and look at how we can stabilise and consolidate current reality to create something with more transparency and scalability.

- Mostly it isn’t that bad but there will be a set of practices which require that treatment. In other cases, the reverse may apply, and the failure to follow the process may be a result of passive-aggressive resistance to change which will need a mix of enforcement and understanding. That may in turn mean a meeting in the middle, a set of amendments or exception-based decisions. We work on that with distributed decision-making in which combinations of roles have the authority to change any formal process and even break it, subject to transparency. More on that in future posts by the way.

- It may be useful to introduce Cynefin here, especially the liminal domains between complex and complicated and the aporetic domain. Often we need transitionary or disruptive projects. I’ve added this into the big picture below but not here as I want to keep it simple, but not simplistic.

- All of that results in a series of action forms. We have, or will have, all forms in downloadable form as part of the Hexi initiative, but just writing stuff on post-it notes is good enough, or you can use the Hexi cards which will be available soon. The advantage of the Hexi shape is that it improves clustering but that is for the action stage. All of those forms are randomised onto a workspace for later use.

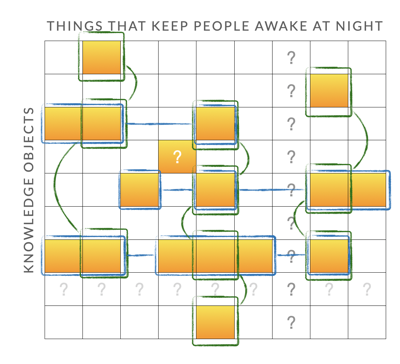

Stream two: creating the dependency grid (horizontal on Hexi map)


- The various ASHEN elements need to be clustered into Knowledge Objects. I’ve played with different names over the years but that one stuck. The way I do it is to ask people with experience in the domain to cluster all the ASHEN elements into groups of things that can be managed as a coherent whole. Remember here that ASHEN is a typology so your lists should not say that this is an artefact or a skill, but just say what the question revealed. I’ve had lots of problems explaining this to those involved in procurement over the years, but I’ve never had a problem with getting people to do it successfully which probably tells you something.

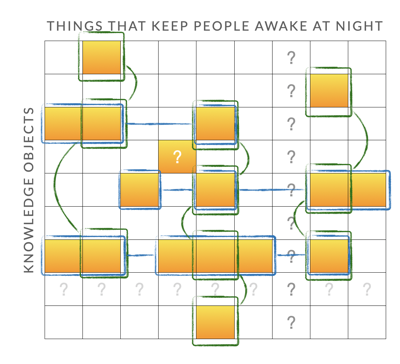

- The Knowledge Objects are then placed on the vertical axis of a grid in no particular order but they are tagged based on an assessment of the vulnerability to loss against impact. Again, if we are using SenseMaker® we can automate it and hide it, but it’s perfectly possible to do it in a workshop. High vulnerability with low impact is no issue; high vulnerability with high impact is a priority, and so on, but that is for a little later.

- The things that keep people awake at night then form the horizontal axis and impact is the main tag.

- We then distribute the grid and get people to score dependencies. I normally use a 0-5 scale or simplify it to high dependency only. This can be a spreadsheet distributed to thousands, with the mean scores then used to indicate high dependency (gradated yellow on the picture), or in a workshop it can be done with simple voting, discussion in parallel with comparison and so on.

- Now we look at the picture and cross our eyes. I’m not joking, it’s a long-standing technique to disrupt perception and sense new patterns. What we then see are two patterns: horizontal (one knowledge object impacts multiple issues) or vertical (one issue requires more effective management of multiple knowledge objects). Each of those patterns is a project and they have different characteristics. Vertical is more difficult to execute but generally has a single owner; horizontal is easier to execute but ownership and definition of success are more difficult. Each may require some secondary work and we will reflect that in the new forms which will be available for the cohort sessions, the first of which is starting soon. You can do some of this clustering with software by the way.

- We then look for other patterns. An issue with no dependencies may mean that we need to acquire knowledge we don’t have; a knowledge object with no dependencies may be surplus to requirements or may be a neglected opportunity. Single or limited dependencies require more attention and they are rarer than you would think.

- So we have another portfolio of projects but we also have a built-in prioritisation. High vulnerability, high impact etc.
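
The grid steps above can be sketched in Python. This is a rough illustration rather than the method itself: every knowledge object, issue, score and tag below is invented, the `HIGH` cut-off of 3.5 is an assumption, and "no dependencies" is read here as "no high-dependency cell".

```python
from statistics import mean

# Hypothetical axes: knowledge objects (rows) and issues (columns).
knowledge_objects = ["client handover notes", "pricing judgement",
                     "legacy system know-how", "archive index"]
issues = ["staff turnover", "regulatory audit", "new market entry"]

# Each respondent scores dependencies on a 0-5 scale; unscored cells count as 0.
responses = [
    {("pricing judgement", "staff turnover"): 5,
     ("pricing judgement", "new market entry"): 4,
     ("client handover notes", "staff turnover"): 4,
     ("legacy system know-how", "staff turnover"): 5},
    {("pricing judgement", "staff turnover"): 4,
     ("pricing judgement", "new market entry"): 5,
     ("client handover notes", "staff turnover"): 5,
     ("legacy system know-how", "staff turnover"): 3},
]

HIGH = 3.5  # assumed threshold for "high dependency"

# Mean score per cell across all respondents.
grid = {(k, i): mean([r.get((k, i), 0) for r in responses])
        for k in knowledge_objects for i in issues}
high = {cell for cell, score in grid.items() if score >= HIGH}

# Horizontal pattern: one knowledge object affects several issues.
horizontal = {k: [i for i in issues if (k, i) in high] for k in knowledge_objects}
horizontal = {k: v for k, v in horizontal.items() if len(v) > 1}

# Vertical pattern: one issue depends on several knowledge objects.
vertical = {i: [k for k in knowledge_objects if (k, i) in high] for i in issues}
vertical = {i: v for i, v in vertical.items() if len(v) > 1}

# Rows or columns with no high-dependency cell at all.
orphan_issues = [i for i in issues if not any((k, i) in high for k in knowledge_objects)]
orphan_objects = [k for k in knowledge_objects if not any((k, i) in high for i in issues)]

# Assumed (vulnerability, impact) tags per knowledge object.
tags = {"client handover notes": ("high", "low"),
        "pricing judgement": ("high", "high"),
        "legacy system know-how": ("low", "high"),
        "archive index": ("low", "low")}
priority = [k for k in knowledge_objects if tags[k] == ("high", "high")]

print(horizontal, vertical, orphan_issues, orphan_objects, priority)
```

The same structure works for a single projected matrix or for many spreadsheets merged together, which is part of why the approach scales. The eye-crossing step is still worth doing, since software only finds the patterns you told it to look for.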

As a general note here, the approach scales. In the Bank of Thailand, we had multiple spreadsheets and an overall dependency grid of thousands of rows by thousands of columns. In a two-day session with the British Treasury (that ended up with the Permanent Secretary staying for the whole process as he was fascinated), we had a single matrix projected onto a screen. Granularity scales as you can assemble from the same source material at people’s capacity to act: a key principle of anthro-complexity.

Action phase


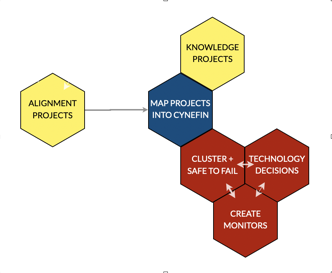

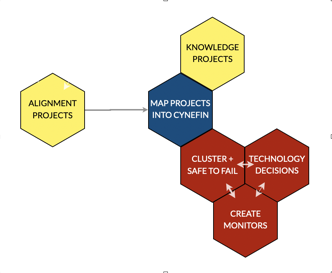

So we now have a mass of small projects, each of which is tangible and linked to a clear need. No platitudes, no idealism, just plain pragmatism. Some of the projects may contradict others and that is fine; we won’t know who was right until we engage. So the stages are fairly straightforward:

- We cluster the projects and then map them onto Cynefin and they will either fall into a domain or in the liminal areas between domains. In some cases, they may (per the aporetic turn in the EU Field Guide) be in the centre and require further division. Again I’ve shown this on the big picture but not at this stage.

- Clustering into Cynefin will result in ordered projects which just need to be executed, complex projects which require parallel safe-to-fail experiments and domain transition projects that require constraint modification. Again expect more forms here as providing structure as scaffolding helps.

- We then look at common practices. For example, if data capture is needed from employees then the deployment of SenseMaker® Genba might service multiple projects. We might need a set of Communities of Practice, we might need search technology. The point is we don’t start with technology solutions; those come last.

- We then create monitors per project cluster or project assembly. Those may be outcome-based or vector-based, and the rhythm of their use may be different along with the consequences of success or failure. At this level, we would expect significant levels of failure in the short term to increase learning and thus resilience. A top-down knowledge programme is far too vulnerable to failure.
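
As a minimal sketch of the sorting and common-practice steps above: the project names, domain labels and `needs` sets below are hypothetical, and the `TREATMENT` table is just a paraphrase of the stages listed, not a formal Cynefin artefact.

```python
from collections import Counter

# Hypothetical project clusters with an assumed Cynefin placement and the
# practices or tools each would draw on. All values are illustrative.
projects = [
    {"name": "document handover checklist", "domain": "ordered",
     "needs": {"communities of practice"}},
    {"name": "capture pricing decisions", "domain": "complex",
     "needs": {"journal capture", "communities of practice"}},
    {"name": "stabilise exception handling", "domain": "transition",
     "needs": {"journal capture"}},
    {"name": "unclear retention problem", "domain": "aporetic",
     "needs": set()},
]

# Paraphrase of the treatments described in the stages above.
TREATMENT = {
    "ordered": "execute",
    "complex": "run parallel safe-to-fail experiments",
    "transition": "modify constraints",
    "aporetic": "divide further before placing",
}

plan = {p["name"]: TREATMENT[p["domain"]] for p in projects}

# Practices needed by more than one project are candidates for shared provision.
need_counts = Counter(n for p in projects for n in p["needs"])
shared = sorted(n for n, count in need_counts.items() if count > 1)

for name, action in plan.items():
    print(f"{name}: {action}")
print("shared practices:", shared)
```

Note that the shared practices fall out of the projects rather than being chosen up front, which is the point about technology coming last.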

Finally what we are really trying to achieve here is to create a continuous scanning and response process in which the maps change in near real-time rather than being a once-off technique. Knowledge is dynamic, it changes with context and humans, with their capacity for abduction, are really good at handling uncertainty with the right scaffolding. We just don’t build systems which utilise that capacity as we attempt to eliminate rather than navigate complexity.

Going further

So that gets us to the big picture and I have been adding to, and amending this as I wrote the above. The main addition I have made is to add in Estuarine Mapping as this can be done as part and parcel of the same exercise and may be a good way to find things that should be keeping people awake at night. The endpoint is another portfolio of projects. Granularity, the ability to fail and the enablement of emergence – the heart of complexity.

Everything together

Colour coding:

- Green represents optional methods/tools that can be used stand-alone or in combination

- Blue (dark and light) represents formal Cynefin methods, the light blue are optional extras

- Yellow represents micro projects

- Red represents workshop or consultancy processes

- The black dot with a C indicates combination or connection

- Double white arrows indicate interaction

- Single arrows represent interaction between processes or methods; they are not shown where it is obvious

The banner picture is cropped from an original by Erol Ahmed and the pebbles and soil picture is by Andrew Kim, both on Unsplash.
