I’m still working through possible material for next week’s training programme in Zurich. One thing I want to tackle in more depth (which I didn’t mention in yesterday’s post) is the difference between design thinking as it is popularly understood and complexity-informed design thinking. More on that tomorrow, but for today I have been playing with the overall model of decision making. This involves going a little beyond the basic Cynefin framework dynamics and the portfolio of safe-to-fail experiments. It is, in part, further development of the core processes around complexity-based strategy; in part the need to explain Cynefin approaches in the context of conventional management thinking. As such it is a work in progress, so I expect it will change before next week and then again after. Like most complexity thinkers I reject Cartesian thinking, but I still have a soft spot for cogito ergo sum, especially when it is translated as I am thinking, therefore I exist; so in this, I am ….

I start with a key principle linked to the danger of premature convergence (along with retrospective coherence and pattern entrainment), namely the importance of description. The more I can describe without interpretation or evaluation the better, as more possibilities open up and I ass/u/me less. With SenseMaker®, when people use the signifiers as abstract descriptions without evaluation (and it can be a struggle even with experienced consultants to make them do that), we reduce evaluation and more possibilities open up as a result. The more you approach the data as an expert, the more you view material through an instrumentalist perspective. In effect you pre-form what you see by the unarticulated hypotheses of your expertise and/or ideology. Those seeking to do good are often the worst by the way, as their claims of innocence in this regard normally manifest as a state of constant moral outrage.

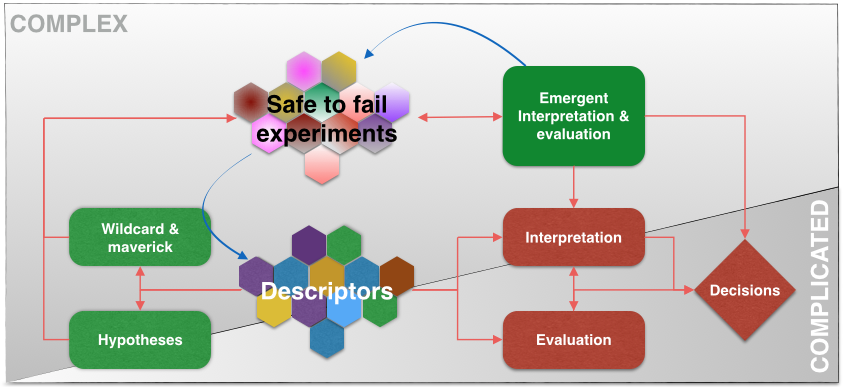

So in the picture below we start in the centre with multiple fragmented descriptors of a situation. Those might come from workshops and be pasted to a wall, or via SenseMaker® in large volume in a landscape picture. Whatever, the point is that you want as many descriptions of a situation (not a problem) as you can get, from as many perspectives as possible. Those always sit on the boundary between complex and complicated and we then have to decide which way to go.

So step one involves looking at the descriptors as a whole. We then identify which aspects are complicated and which are complex. (If you don’t know about narrative-based definition of models for decision support then you need to come on day two of the course as that is when we teach it, and/or look up four points methods for creating Cynefin). Those aspects that are complicated can then be subject to interpretation and evaluation, ideally in parallel with one informing and changing the other, all evidence based, before a decision or set of decisions is made. Remember that no action is also a decision. I am introducing some non-linearity by co-evolving interpretation and evaluation, but that type of thinking was around before complex adaptive systems thinking. I used it in my MBA thesis oh so many decades ago (creating decision support systems in international organisations if you didn’t know).

On the complex side we start to look for coherent hypotheses about what might be interesting to try out, which is very definitely not different hypotheses of interpretation or evaluation. It’s all about things that we would do. If you have any sense you also let in a few wild cards or maverick solutions just for the hell of it. In a portfolio you can get away with that even with the most conservative of senior managers. Remember that the key heuristic for mapping something as complex is that the evidence supports multiple hypotheses or ideas for intervention, so we need to reflect that diversity.

All of those coherences then get some resource or funding to run multiple parallel safe-to-fail experiments, some of which have to be naive, some oblique and so on (day one of the course). I tried to use shades of grey there to illustrate the pain of ritual dissent but it needs pointing out so it may not have been a good idea! Those then move into an emergent process of interpretation and evaluation. In practice some experiments succeed self-evidently as some fail, others fail but in interesting ways, others merge and mutate and sustain. Loose coupling and giving space for emergence are key here. The blue lines in the main diagram then show how the issues may stay in the complex domain, generating more safe-to-fail experiments.  If you have used SenseMaker® to record and monitor the experiments (which I recommend) then they become new descriptors and the whole complex side of this becomes really messy in the best tradition of creativity and adaptability. It’s less messy on my diagram as I am trying to make a point!

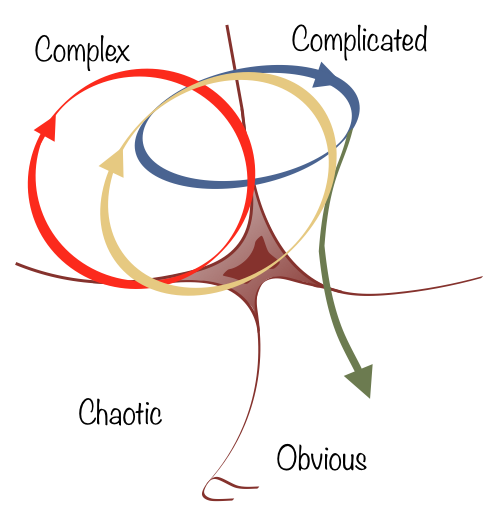

Cynefin is normally explained with three dynamics and we can now add a fourth:

I hope that makes sense, any and all comments welcome. I’m working more generally on the dynamics for Zurich as well as I want to show them on the domain maps so that the different representations all tie together.

Cognitive Edge Ltd. & Cognitive Edge Pte. trading as The Cynefin Company and The Cynefin Centre.

© COPYRIGHT 2024
